I’m having success with a similar procedure.

I run bet2 on the mean b0 image after eddy correction, then pass the resulting mask to dwibiascorrect. After that, I run dwi2mask on the bias-corrected image.
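Here is a rough sketch of those steps as a small Python helper that only assembles the command lines and does not run anything. The file names and the `build_pipeline` helper are made up for illustration. It assumes the eddy-corrected DWI is a `.mif` with the gradient table embedded, that `FSLOUTPUTTYPE` is `NIFTI_GZ`, and that the MRtrix3 version is an older one where the bias-correction algorithm is chosen with a flag (`-fsl` / `-ants`). As far as I know, newer releases take the algorithm as the first positional argument instead, so adjust that line to match your installation.

```python
import shlex


def build_pipeline(dwi, prefix):
    """Return the shell commands for the masking / bias-correction steps.

    `dwi` is the eddy-corrected DWI series (assumed .mif with gradients
    embedded); `prefix` is a hypothetical output prefix.
    """
    q = shlex.quote
    mean_b0 = f"{prefix}_meanb0.nii.gz"
    bet_root = f"{prefix}_bet"
    # bet2 -m writes the binary mask as <root>_mask (extension depends on
    # FSLOUTPUTTYPE; NIFTI_GZ is assumed here)
    bet_mask = f"{bet_root}_mask.nii.gz"
    biascorr = f"{prefix}_biascorr.mif"
    final_mask = f"{prefix}_mask.mif"
    return [
        # 1. mean b=0 image from the eddy-corrected data
        f"dwiextract {q(dwi)} - -bzero | mrmath - mean {q(mean_b0)} -axis 3",
        # 2. brain extraction on the mean b=0 image, keeping the mask
        f"bet2 {q(mean_b0)} {q(bet_root)} -m",
        # 3. bias correction restricted to the bet2 mask (older flag syntax)
        f"dwibiascorrect -fsl -mask {q(bet_mask)} {q(dwi)} {q(biascorr)}",
        # 4. final mask computed from the bias-corrected DWI
        f"dwi2mask {q(biascorr)} {q(final_mask)}",
    ]


if __name__ == "__main__":
    for cmd in build_pipeline("dwi_eddy.mif", "sub01"):
        print(cmd)
```

Printing the commands first makes it easy to check paths and flags before pasting them into a shell.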

For high b-value data, it's worth noting that this procedure only works when passing the -fsl argument to dwibiascorrect.

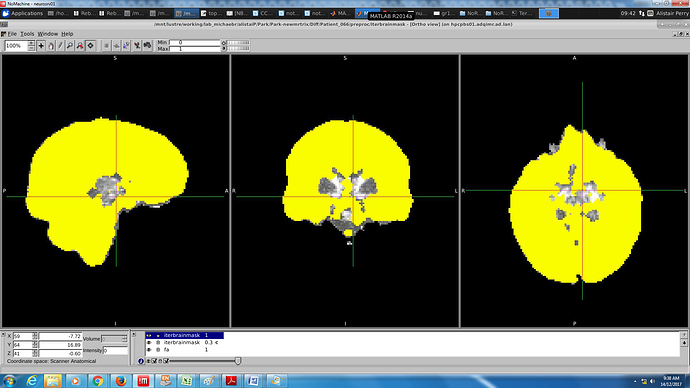

With ANTs correction:

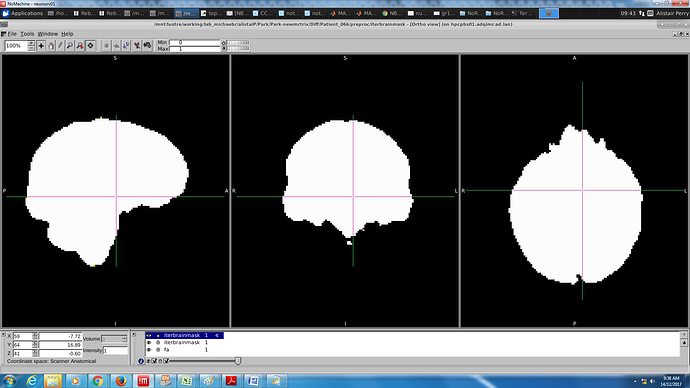

Now FSL: